Many scientific discoveries and invention ideas were the result of painstaking research and relentless persistence to solve long-standing problems. In cases like these, a breakthrough is often the brainchild of a team of dedicated scientists determined to provide solutions for known difficulties or give answers to scientific inquiries.

However, there have also been quite a number of important discoveries that happened merely by mistake or were caused by a lack of discipline. Here are some great product design discoveries and inventions that came about thanks to some lucky accidents.

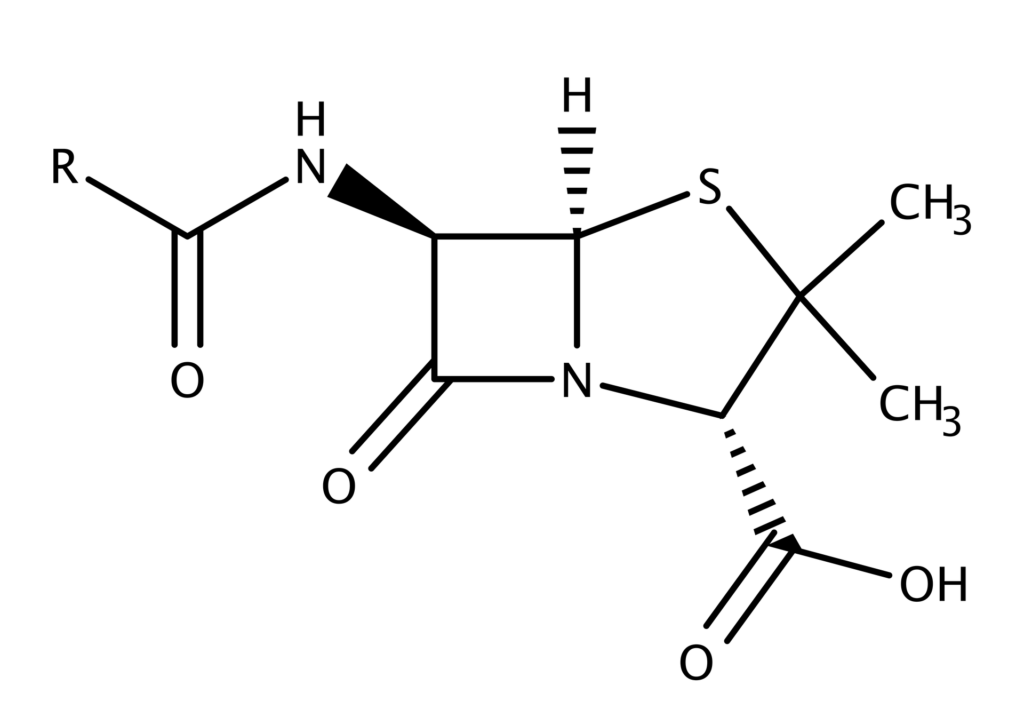

I. Penicillin Antibiotics

An invention – or a discovery – of medicine is often driven by necessity rather than ingenuity. Diseases are always a step ahead of the cures, and scientists from all around the world race to come up with effective treatment methods to cure the ill. The same is true about the development of penicillin, although the discovery itself was accidental.

Dr. Alexander Fleming certainly had no intention of revolutionizing the medical world on September 28th, 1928, when he discovered the world’s first antibiotic, yet he managed to do exactly that. Penicillin is a concentrated form of a naturally occurring antibacterial substance produced by Penicillium mold.

Prior to the discovery of penicillin, Fleming was already a reputable researcher. He was one of the first scientists to explain that antiseptics used in World War I actually killed more soldiers than infections. Another notable discovery was lysozyme, a naturally occurring antibacterial enzyme present in nasal mucus, saliva, and even tears.

In addition to those, Fleming also developed a reputation as a messy researcher, in the sense that he often left his workplace untidy. His ability to work comfortably in an unorganized laboratory was a blessing for the medical world because it created the perfect environment from which penicillin revealed its presence: a laboratory filled with mold, a pile of Petri dishes, and a brilliant researcher.

Fleming discovered penicillin in London after returning from a summer vacation in Scotland. He arrived back at his laboratory on September 3rd, 1928. Since many of the Petri dishes were filled with neglected samples, he began taking a closer look at each one of them to see if any could still be salvaged. As expected, he found most of them contaminated and threw them away in a container filled with disinfectant.

It was Fleming’s decision to inspect the neglected Petri dishes before throwing the samples away that led to the discovery of penicillin. Some of the Petri dishes contained colonies of Staphylococcus aureus, a round-shaped bacterium typically found in the respiratory tract and on the skin. When inspecting the bacteria under a microscope, he realized that a mold called Penicillium notatum had destroyed the bacteria surrounding it. In the area around the mold, there were no bacteria; the mold had killed them all.

During the weeks that followed, Fleming grew enough of the same mold to do more testing. He was convinced that something in the mold inhibited and killed the bacteria, but he was not sure what made it happen. After some experimentation, Fleming found that the mold produced a substance with the ability to kill disease-causing bacteria.

He called the substance penicillin. The laboratory where the discovery happened is now preserved as the Alexander Fleming Laboratory, as part of St. Mary’s Hospital in Paddington, London.

It would take 14 more years before the first civilian received penicillin as part of a medical treatment. The lucky patient was Anne Miller, who suffered from blood poisoning following a miscarriage. She had been lying in New Haven Hospital in Connecticut for about a month with no sign of improvement. Doctors tried blood transfusion and surgery to treat her condition, to no avail.

The hospital received a tiny amount of a drug containing penicillin (more than a decade after its discovery, penicillin was still in its experimental phase) and injected Mrs. Miller with it. Her temperature dropped overnight. Her hospital chart is now at the Smithsonian Institution. Between the initial discovery and Miller’s survival, however, there was an important sequence of events.

Contributing Scientists

Fleming will always be credited as the scientist who discovered penicillin. Some go further by saying that he actually saved more lives than anybody else in history. One thing is certain: he could not have done it on his own. Several other scientists played major roles in the development of the drug, including:

- Dr. Howard Florey, a professor of pathology who also served as the director of the Sir William Dunn School of Pathology at Oxford University.

- Dr. Ernst Chain, a biochemist and acutely sensitive man.

- Dr. Norman Heatley, a biologist and biochemist who developed the extraction and purification techniques of penicillin in bulk.

As strange as it may sound, Fleming did not have the necessary chemistry background or laboratory resources to develop penicillin to its fully effective form. The active bacteria-inhibiting substance produced by Penicillium notatum still needed to be isolated and purified to determine its efficacy as a medicine. These tasks fell to Florey and his team, consisting of Chain, Heatley, and Edward Abraham.

Two things separated Florey from the vast majority of scientists: his ability to convince people in power to grant him research funds, and his expertise at running a sophisticated research facility filled with many talented scientists. Together with Chain, he tested a penicillin fluid extract on 50 mice that had been infected beforehand with streptococcus, a deadly bacterium. Half of the mice were treated with the extract, and those mice survived.

It was not until 45 years later that Heatley was recognized as the scientist who developed the proper extraction and purification techniques of a large quantity of penicillin. Fleming, Florey, and Chain shared a Nobel Prize in Physiology or Medicine in 1945. Heatley was not one of the recipients.

In 1990, Heatley was awarded an honorary Doctorate of Medicine from Oxford University; it was an unusual distinction, the first to be awarded to a non-medic in the university’s 800-year history.

A laboratory assistant named Mary Hunt also accidentally discovered a specific type of fungus that could produce enough penicillin for bulk production. In fact, Penicillium chrysogenum could produce 200 times more penicillin than the species Fleming had described over a decade earlier. With mutation-causing X-rays and filtration, the production capacity increased to 1,000 times higher.

In the early stages of World War II, Florey and Chain flew to the United States to work with American scientists on a method to mass-produce penicillin. During the first five months of 1942, around 400 million units of pure penicillin were produced. It subsequently became known as the wonder drug.

It is interesting to think that Fleming did only a small fraction of the work in the entire development of penicillin as an effective drug to treat infections. As more reporters began to cover stories about early trials of the substance in 1941, people took the liberty to credit Fleming as the sole discoverer (this is not entirely inappropriate because other scientists based their work on Fleming’s discovery) and ignore the contribution of Florey’s team at Oxford; the Nobel Prize in 1945 partially corrected the problem.

To put it in simple words, Fleming discovered penicillin. Florey and Chain did experimentations and tests to determine its efficacy and took the next step: mass production. Those three received and shared the Nobel Prize, but it surely would not have happened without Heatley’s proper extraction and purification methods.

II. LSD (Lysergic Acid Diethylamide) a.k.a. Acid

Albert Hofmann is most famously associated with the rather unconventional sequence of events that led to the discovery of the psychedelic drug known as lysergic acid diethylamide (LSD). He was born in Switzerland and earned his chemistry degree at the University of Zurich.

He landed a job at Sandoz Laboratories (now a subsidiary of Novartis) in Basel in the pharmaceutical-chemical department of the company. Hofmann was a co-worker of Professor Arthur Stoll, who was also the founder of that pharmaceutical department. One of Hofmann’s primary tasks was to research lysergic acid derivatives for their medicinal properties.

Hofmann successfully synthesized LSD on November 16th, 1938. At the time of the discovery, however, Hofmann’s intention was to synthesize the chemical for use as an analeptic (circulatory and respiratory stimulant). While it was not an accidental discovery, he didn’t become aware of its true powerful effects until five years later. The discovery of the drug’s more dangerous potential was, in fact, accidental.

Due to the lack of scientific interest in the newly discovered LSD, the drug was set aside for about five years. On April 16th, 1943, Hofmann revisited the drug. By accident, he absorbed a small amount of LSD through his fingertips and discovered interesting effects. He experienced restlessness mixed with a little bit of dizziness, an intoxicated-like state combined with a stimulated imagination and uninterrupted images of exciting colors. Those effects faded away after about two hours.

Bicycle Day

Three days after that first experience, Hofmann conducted a self-experiment in which he deliberately ingested 250 micrograms of LSD. He believed this amount to be the threshold dose. The actual threshold dose was only 20 micrograms, so he had taken more than ten times as much. What happened next later became known as Bicycle Day.

Due to the intense psychological effects of the drug, Hofmann asked an assistant to escort him home. Because motorcycle use was prohibited in the country during wartime, they had to ride bicycles. On the journey, Hofmann’s condition rapidly deteriorated, with feelings of anxiety and hallucination taking over.

As the story goes, he came to a point where he thought his next-door neighbor was a witch. When he finally arrived at home, the doctor came to evaluate his condition. The doctor concluded that Hofmann did not suffer from any physical abnormality except for dilated pupils.

The patent for LSD was originally registered under Sandoz Laboratories. Before the patent expired in 1963, the United States Central Intelligence Agency (CIA) started a widespread experiment regarding the use and effects of LSD on humans. One of the most controversial procedures of the experiment was administering LSD without the subjects’ knowledge. The subjects included CIA employees, mentally ill patients, doctors, military personnel, and even members of the general public. Beginning on October 24th, 1968, possession of LSD was illegal in the United States. The use of LSD continued to be legal in Switzerland until 1993.

III. Implantable Artificial Pacemaker

Artificial cardiac pacemakers already existed when Wilson Greatbatch accidentally arrived at a smaller and more practical implantable design in 1956, and he was not the first to build an implantable model. Credit for the first implantable pacemaker goes to Rune Elmqvist, a doctor and engineer who designed and built the device under the direction of Åke Senning, the surgeon who first implanted an artificial pacemaker into a human patient on October 8th, 1958.

Greatbatch came up with the idea in 1956, but his device was not used on a human patient until 1960. However, the first successful animal testing using Greatbatch’s implantable pacemaker took place on May 7th, 1958. While Elmqvist soon ceased the development of his design, Greatbatch kept working on his for years and even founded Wilson Greatbatch Ltd. to produce lithium-iodine batteries, the power source of his pacemaker. The company is now known as Integer Holdings Corporation.

Similar to the discovery of penicillin by Fleming, the invention of the implantable artificial pacemaker by Wilson Greatbatch was completely accidental. Greatbatch came across the idea of the device when he was working on a different medical instrument intended to monitor heart sounds. He made a mistake in the process, but this mistake led to the invention of a much more useful device: the pacemaker.

In 1952, two years after graduating from Cornell University with a B.E.E. (Bachelor of Electrical Engineering), Greatbatch started teaching electrical engineering at the University of Buffalo. It would take four more years until he made the glorious blunder through which he designed and built his pacemaker.

In 1956, while serving as an assistant professor in electrical engineering at the University of Buffalo, he was working on an electric heart-rhythm recording device for the university’s Chronic Disease Research Institute. Approaching the end of the project, he mistakenly installed a resistor with a much higher resistance than the oscillator required, which was only 10 kΩ. As the story goes, he reached into a container filled with various electrical components and grabbed the wrong part after misreading the color code. What he installed was a 1 MΩ resistor. Although his recording device failed, he realized that the device could be used for another purpose.
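To see how easy such a mix-up is, here is a minimal sketch of decoding a three-band resistor. The band colors are an assumption drawn from the standard resistor color code, not from any account of Greatbatch’s actual parts, and the function name `resistance_ohms` is purely illustrative. Under that code, a 10 kΩ and a 1 MΩ resistor share their first two bands and differ only in the multiplier band.

```python
# Standard resistor color code: a color's position in this list is its digit.
# The specific bands below are an assumption based on that standard code,
# not a detail reported in the story.
COLORS = ["black", "brown", "red", "orange", "yellow",
          "green", "blue", "violet", "gray", "white"]


def resistance_ohms(band1, band2, multiplier):
    """Decode a three-band resistor: two digit bands and a power-of-ten band."""
    digits = 10 * COLORS.index(band1) + COLORS.index(band2)
    return digits * 10 ** COLORS.index(multiplier)


intended = resistance_ohms("brown", "black", "orange")   # the 10 kΩ part
installed = resistance_ohms("brown", "black", "green")   # the 1 MΩ part

print(intended, installed, installed // intended)
```

Only the third band differs (orange versus green), yet the installed part has 100 times the intended resistance.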

As soon as the wrong resistor was plugged in, the device began to “squeg” with a 1.8-millisecond pulse. The more interesting part was that each pulse was followed by a one-second interval during which the device was practically cut off and drew almost no current.
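A quick calculation shows why that rhythm looked so familiar. This is only a rough sketch: it assumes the one-second quiet interval starts after each 1.8-millisecond pulse ends, which the account does not state explicitly, and the variable names are illustrative.

```python
PULSE_S = 1.8e-3   # pulse width from the text: 1.8 milliseconds
QUIET_S = 1.0      # quiet interval from the text: one second

# Assumption: one full cycle is a pulse followed by the quiet interval.
period_s = PULSE_S + QUIET_S
pulses_per_minute = 60.0 / period_s
duty_cycle = PULSE_S / period_s   # fraction of time the circuit is active

print(f"{pulses_per_minute:.1f} pulses/min, duty cycle {duty_cycle:.2%}")
```

Under these assumptions the circuit fires roughly 60 times a minute, close to a resting heart rate, while staying active for less than 0.2% of the time.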

He started to wonder if the oscillator would be more appropriate not as a heart-rhythm recording device as he had intended, but as an instrument to drive the human heart. It is worth mentioning that Greatbatch was a deeply religious man. He claimed that the mistake was not actually a mistake at all, because the Lord was working through him.

As confident as he was, it would take serious efforts to find a doctor, especially a heart surgeon, who welcomed the idea and saw the viability of the device as an implantable pacemaker.

The Bow Tie Team

About two years later, Greatbatch brought his device to the animal lab at Buffalo’s Veterans Hospital. By this time, the device had been made smaller and refined so that it was shielded from bodily fluids. The chief of surgery at the hospital, Dr. William Chardack, took an interest in the device.

Together with Dr. Andrew Gage, also a surgeon, they performed one of the first successful attempts at using Greatbatch’s pacemaker design on a dog on May 7th, 1958. As the doctors exposed the dog’s heart to two pacing wires, the heartbeat synced with the device. Chardack, amazed by the procedure, famously exclaimed, “Well, I’ll be damned” to express his feeling.

The three men later became known as the “Bow Tie” team. The two doctors wore bow ties because children were inclined to pull on long ties; Greatbatch wore one because long ties often got in the way when he was soldering. During the months and years that followed the procedure, they worked together on research and experiments.

Over the course of two years, all trials were done with animals. Greatbatch patented his invention in 1959. Finally, in 1960, Chardack reported a successful implantation of the pacemaker in a human. The patient was a 77-year-old man suffering from complete heart block. He survived without complications for two more years before passing away from non-cardiac causes. Within just a year of the first successful attempt, the Bow Tie team reported 15 more pacemaker implantation procedures on human patients.

Despite its remarkable success during the first few years of development, Greatbatch’s pacemaker suffered from ineffective batteries and from the unreliability of its leads as long-term electrodes. Thankfully, those problems were sorted out over the following several years, and the improvements helped popularize the pacemaker. Collaborations between doctors, engineers, and patients contributed heavily to its development. Some notable milestones include:

- Paul M. Zoll, one of the pioneers of cardiac defibrillation, helped found the Electrodyne Company to manufacture heart rhythm monitors and chest-surface pacemakers, among other things.

- Medtronic Inc. started mass production of the Chardack-Greatbatch pacemaker.

- Greatbatch, following his collaboration with Medtronic, founded his own company to produce lithium-iodine cells to power his pacemaker.

Throughout his lifetime, Greatbatch was a prolific inventor with 350 patents under his name. His later interests included HIV treatment research and nuclear power as an alternative energy source to reduce dependency on fossil fuels. He was inducted into the National Inventors Hall of Fame in 1986, received the National Medal of Technology from President George H.W. Bush in 1990, and earned the Lifetime Achievement Award from the Massachusetts Institute of Technology in 1996. He died on September 27th, 2011, at the age of 92.

IV. Superglue

The first discovery of what would become superglue happened in 1942, when Harry Coover and his team of scientists were working on various materials to make the perfect clear plastic gunsight for military use. Coover could not have been any less excited when one of the formulations they tried turned out to stick to just about everything it came into contact with. There was no way it could be the ideal material for a clear gunsight, so the team rejected it and moved on.

In 1951, at Eastman Kodak, Coover and a researcher named Fred Joyner worked together to test hundreds of materials in search of the right temperature-resistant coating material to be used in a jet cockpit. One of the procedures involved in the test was to put each compound between two lenses on a refractometer. When Joyner was about to end the testing process for material number 910 on the list, he could not separate the two lenses. He panicked, which was an understandable reaction since he had just ruined an expensive piece of laboratory equipment. In the 1950s, a refractometer cost about $3,000 (roughly $30,000 in today’s dollars), so it was indeed a serious mistake.

Going Commercial

Instead of punishing Joyner for destroying the expensive equipment, Coover realized the potential commercial opportunity the discovery offered. The material turned out to be a cyanoacrylate. From this, he later developed an adhesive, which became available in 1958. The product was sold as Eastman #910 (later renamed Eastman 910).

In the 1960s, Eastman Kodak sold its cyanoacrylates to Loctite, which then repackaged and rebranded the product as the Loctite Quick Set 404 before sending it to store shelves. However, Loctite eventually became independent from Eastman Kodak in 1971 and built its own manufacturing facility to reintroduce the same adhesive as Super Bonder. Some would say that Loctite ended up exceeding Eastman Kodak’s market share of cyanoacrylates in the 1970s.

In around the same time period, the National Starch and Chemical Company acquired Eastman Kodak’s entire cyanoacrylates business. In addition to this purchase, the company also bought and combined other businesses to form Permabond. Superglue did not make Coover a rich man because the patent had expired before the product became a commercial success. He died on March 26th, 2011 of natural causes at the age of 94.

Coover, the man who invented superglue, was born on March 6th, 1917 in Newark, Delaware. He received his Bachelor of Science in chemistry from Hobart College and his Ph.D. in chemistry from Cornell University. Superglue is only one of 460 patents registered under his name.

In 2004, Coover was inducted into the National Inventors Hall of Fame. He also received the National Medal of Technology and Innovation in 2010 from President Barack Obama. In addition, he received the Maurice Holland Award, Southern Chemist Man of the Year, Earle B. Barnes Award, IRI Achievement Award, and Industrial Research Institute medal, and was elected to the National Academy of Engineering.

V. X-Ray

Also referred to as the Roentgen ray, after the man who discovered it, the X-ray was an unintentional finding by a professor at the University of Würzburg in 1895. At the time of the discovery, Wilhelm Conrad Roentgen was 50 years old and had already developed a reputation as a careful experimenter. He had obtained the physics chair at the university seven years before he made the discovery.

Roentgen was an avid researcher who enjoyed being left alone in the laboratory. His most important discovery started with his curiosity about a somewhat common (in the scientific community) yet poorly understood device called the Crookes tube. It was an experimental model of an electric discharge tube invented by William Crookes, a British physicist and chemist, around 1870–1875.

The tube was basically a sealed glass cylinder, partially evacuated of air, housing two electrodes: one anode and one cathode. When a high voltage was applied between the two electrodes, the tube produced a strange glow with a yellow-green spot visible on the glass. Although Roentgen didn’t manage to come up with a satisfying answer as to what happened inside the tube, he was curious about what happened outside of it.

The glow, as physicists later established, was produced by subatomic particles (electrons) escaping the cathode and traveling toward the anode.

There are different accounts of what happened in Roentgen’s lab when he made the discovery. One account suggests that the Crookes tube caused a phosphor-coated board to glow. It was strange since the board was located a few feet behind him. He tried again, but this time, he covered the tube with heavy black cardboard before he applied a voltage to it, with the intention of blocking the light. Even when the tube was completely covered up, the board continued to glow.

Keep in mind that some scientists came across a similar phenomenon before Roentgen did. However, they were more interested in figuring out the mystery of the tube itself. Most scientists also assumed that the Crookes tube only emitted one type of radiation – one that produced the glow – but Roentgen concluded that another type of radiation was present.

He didn’t know what it was, so he just called it the X-ray. In fact, this radiation was able to pass through solid objects (the cardboard) and excite phosphorescent materials (the board). After further experimentation, he understood that the radiation could pass through human tissue too, but not through bones and metals. One of his first experiments produced a film of his wife’s hand. Despite its obvious advantage for medical purposes, the X-ray was first used for an industrial application when Roentgen produced a radiograph of a set of weights inside a box.

The discovery of the X-ray sparked a huge interest not only in the scientific community, but also in the society at large. Scientists tried to replicate the phenomenon using their own methods as the cathode ray also became more popular. People read about it in newspapers and magazines; some stories were true, while others were more fanciful.

Scientists were fascinated by the fact that there was radiation with a shorter wavelength than that of visible light, which opened up more possibilities in the world of physics. People, of course, were amazed at how the radiation passed through solid objects. One thing was certain: the X-ray was a bombshell. The news about the discovery, as well as the name of the radiation, soon traveled all around the world.

Within just months of the discovery, medical radiographs appeared in many countries across Europe as well as in the United States. In June 1896, six months after Roentgen had made the announcement, physicians began using the X-ray to locate bullets in the bodies of wounded soldiers. During the early years of development, most applications were limited to dentistry or physiology in general. Industrial application required much more efficient and powerful X-ray tubes to produce a radiograph of satisfactory quality.

In 1913, everything changed. William Coolidge, an American engineer and physicist who was also the director of General Electric Laboratory, made a major contribution to X-ray development by designing a more powerful model of X-ray tube capable of handling up to 100,000 volts of electricity.

Development kept on going from that moment, and eventually, in 1931, the world became familiar with X-ray tubes developed by General Electric that operated safely at a million volts. Widespread industrial application really took off when ASME (American Society of Mechanical Engineers) allowed X-ray approval for fusion-welded pressure vessels.

New Radio Activities

Within one year after the X-ray was discovered, a French scientist named Henri Becquerel came across a natural form of radioactivity. As previously mentioned, many scientists were working on cathode rays, either to replicate Roentgen’s experiment or to answer the mystery of the tube itself.

Becquerel discovered that atoms of certain elements could disintegrate and form new elements when he was working on fluorescence. In his experiment, Becquerel used photographic plates to record the results. On a cloudy day when he couldn’t get enough sunlight to continue his experiment, Becquerel stored his photographic plates, as well as some compounds he used, in drawers. When he developed the plates, he noticed that they were fogged as if they had been exposed to light. That shouldn’t have been possible since he had wrapped the plates properly to prevent unnecessary light exposure before usage, so there must have been another source of radiation in the drawer. Even more bewildering, it had passed through the tight wrapping.

As it turned out, only the photographic plates, stored in the drawer earlier with uranium compound, fogged while the others did not. He concluded that uranium was also able to emit radiation powerful enough to penetrate the wrapping.

More testing confirmed his hypothesis. The discovery of the radioactive property of uranium was therefore also accidental. Unlike the X-ray, unfortunately, Becquerel’s discovery did not receive the news coverage it deserved. Most scientists did not even learn of it right away.

The paradigm for radioactivity changed again two years later when Marie Curie, a Polish scientist, took great interest in Becquerel’s discovery. She was not convinced that uranium was the only radioactive element in the uranium ore (pitchblende).

Together with her husband, Pierre Curie – also a scientist – she conducted more experiments to find the other elements. She found two others, named polonium and radium; both were more radioactive than the uranium itself. Radium became the radioactive element of choice in the industrial application of radiography. It was so powerful it could penetrate a 12-inch thick solid object. Cobalt and iridium practically replaced radium by the late 1940s as those elements were more affordable and more powerful at the same time.

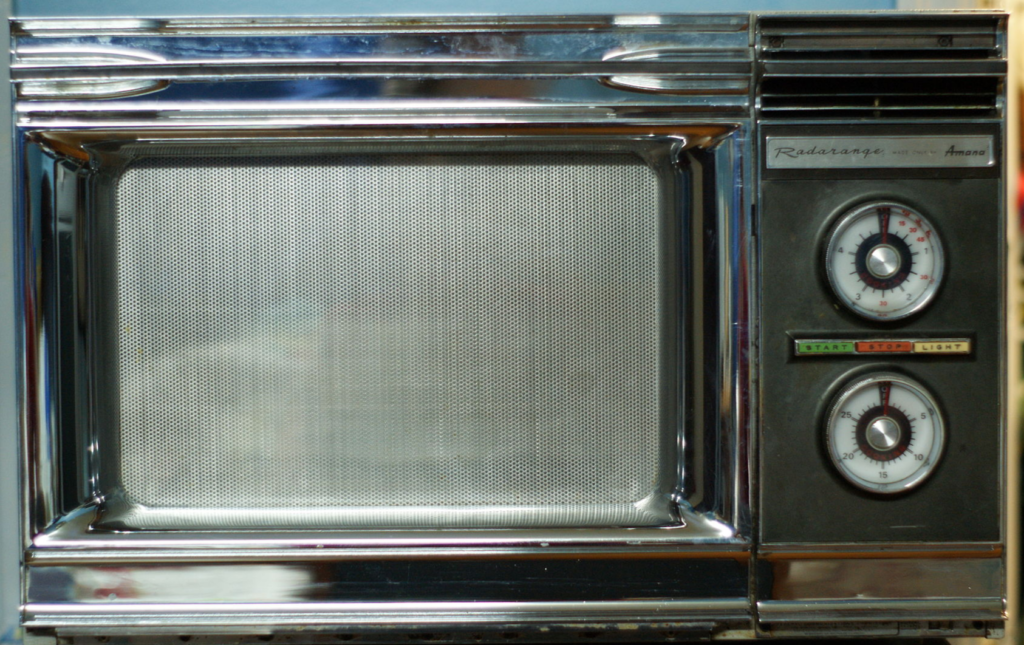

VI. Microwave Oven a.k.a The Microwave

There is a microwave oven in the home of at least 90% of Americans. It is certainly one of the most popular kitchen appliances out there and there are very few reasons why it should not be. The person behind the invention of the microwave oven was Percy Spencer, an American physicist, who unintentionally discovered the basic mechanism of the now-widely-used electronic appliance in 1945.

Spencer was already used to hard work in his teenage years before he decided to join the Navy during World War I. After the war, he landed a job at the American Appliance Company, whose co-founder Vannevar Bush later became known as an organizer of the Manhattan Project. This company changed its name to Raytheon in 1925. By this time, Spencer had already developed a reputation as one of the most valuable engineers the company had hired, despite having very little formal education. During World War II, when Raytheon was working on a project to improve radar technology, Spencer was its regular problem solver. He had a great talent for pointing out manufacturing problems and coming up with simple solutions to them. He also played a big role in the development of a detonator with which one could trigger artillery shells so that they exploded in mid-air rather than after hitting the target.

For the radar-improvement project, Spencer’s focus was on something called the magnetron, a high-powered diode vacuum tube. A radar magnetron is like an electricity-powered whistle, but it emits electromagnetic waves instead of sounds. It was in this project that the idea for the microwave oven came to him. He was trying to increase the power level of the vacuum tube, which naturally also involved testing the apparatus. In the middle of a test, he stuck his hand in his pocket to grab a peanut cluster bar; to his surprise, it had melted.

The Snack

Some versions of the story suggest that Spencer had pocketed a chocolate bar for his lunch break, but the truth was that Spencer loved chipmunks and squirrels, and he used to feed them during lunch with a peanut cluster bar. While this may seem like a trivial distinction, there is an important message to understand. Chocolate melts at a fairly low temperature, around 80 degrees Fahrenheit (27 degrees Celsius), so a melted chocolate bar in a pocket would hardly have been remarkable. What Spencer’s peanut cluster bar encountered on the day of the discovery was a much more powerful source of energy.

Curious about what he saw, Spencer was inclined to do more tests with the magnetron. Instead of using another peanut bar, this time he used an egg, placed directly underneath the vacuum tube. As expected, the egg exploded. Spencer did more tests using corn kernels and ended up sharing the cooked popcorn with his colleagues in the office. The microwave oven was born.

Partly because it was an accidental invention, Spencer was not aware of any potential health hazards from exposing foods to electromagnetic radiation. Let us not forget that the microwave oven was still brand-new technology in the development phase, so the information was simply unavailable. Spencer used the appliance to cook foods not because he was sure it did not pose any health hazards, but because he had no information to suggest otherwise.

Note: We now know that foods cooked in a microwave oven are as safe as those processed in the conventional electric oven, and they even have the same nutrient values. The main difference between the two appliances is that the heat emitted by a microwave oven penetrates deeper into the food than that generated by its conventional counterpart. The WHO (World Health Organization) confirms that foods cooked in a microwave oven do not become radioactive. According to the FDA (Food and Drug Administration), most injuries related to microwave ovens are due to burns from hot metals, overcooked or undercooked foods, and exploding liquids. Even when the injuries are radiation-related, they are most likely caused by improper servicing, such as gaps in the doors or openings that allow radiation to escape. Even under such circumstances, it would take a large amount of radiation to cause any harm. Modern microwave ovens manufactured and sold in the U.S. must comply with standards set by the FDA to prevent radiation leaks. All in all, modern microwave ovens are safe as long as they are used in accordance with the manufacturers’ instructions.

Spencer and Raytheon called the invention “Radarange” and patented it. In 1947, two years after the discovery, the microwave oven became commercially available for the first time. It cost around $5,000 (or around $57,000 in today’s dollars). It did not even look like the microwave oven we all know today. Its original design was a medium-sized box with clear glass and some digital controls. The Radarange was a tad shorter than six feet and weighed 750 pounds. It took 20 minutes to reach the desired temperature but was certainly much more powerful than today’s models.

The Radarange could cook a potato in approximately thirty seconds. Even so, sales of the Radarange did not meet Raytheon’s expectations. Some people were reluctant to spend that much money for just a little more convenience in the kitchen, while others were terrified of the new technology’s potential health hazards. As a matter of fact, the Radarange microwave oven became Raytheon’s greatest commercial flop. Before the home-grade version was made available, Raytheon made some commercial-grade microwave ovens, including the “Raydarange” intended for restaurants and reheating meals on airplanes. Some of them required 1.6 kW magnetron tubes and constant water cooling to prevent damage.

The microwave oven saw commercial success after Raytheon began licensing the technology in 1955. One of the first high-selling consumer-grade microwave ovens was the Tappan RL-1. Compared to the first models, this was much cheaper, priced at $1,295 (about $12,000 in 2018). It would take more than a decade until Raytheon acquired Amana Refrigeration and started selling microwave ovens again under the Amana Radarange brand. Anybody could purchase the appliance for a modest $495 in 1967. As technology improved, the refrigerator-sized microwave oven was made smaller. Newer models were more manageable and increasingly popular, so much so that their sales actually surpassed those of gas-fueled models in 1975. By this time, Spencer’s invention was more often referred to as the microwave oven than the Radarange.

Concerns about microwave oven safety preceded its widespread adoption all around the world. After testing more than a dozen microwave ovens, Consumers Union concluded that none were “absolutely” safe. Part of the reason for this conclusion was the absence of solid data about safe levels of radiation. To minimize risks due to manufacturing defects, the Bureau of Radiological Health (part of the FDA) was tasked with safety-testing all makes and models of newer microwave ovens. Some procedures in the test included:

- Slamming the door 100,000 times to see whether continuous everyday usage led to a radiation leak

- Filling cavities with cups of water

- Running life tests to figure out whether specific models deteriorated or degraded to a dangerous level

During its lifetime, a microwave oven should not degrade to the point where it emits more than five milliwatts of radiation per square centimeter, measured about two inches from the oven surface. All microwave ovens must comply with the standards set by the bureau. The standards were put into effect on January 1st, 1971 for imported models and October 6th, 1971 for those manufactured in the U.S. Since then, all microwave ovens have been considered safe for household usage. That being said, it does not mean anybody can safely use the appliance for anything other than its intended purpose. Always read the manual that comes with your microwave oven and make sure it is regularly maintained or serviced to prevent health risks.

For his invention of the microwave oven, Spencer was inducted into the National Inventors Hall of Fame, sharing a spot with other big-name inventors including the Wright Brothers and Thomas Edison.

VII. Saccharin a.k.a Artificial Sweetener

The discovery of saccharin (an artificial sweetener) started with more or less the same carelessness that led to penicillin. Poor cleanliness in the chemical laboratory can cause disasters, but Constantin Fahlberg turned it into a serious money-making venture.

Working with Ira Remsen in the laboratory at Johns Hopkins University in 1878, Fahlberg certainly did not expect to discover the formula for the now-ubiquitous artificial sweetener. One day after long hours in the laboratory, Fahlberg went home to enjoy homemade bread his wife had prepared for him. As he started to eat the bread, he noticed something different in how it tasted. He asked his wife if she had used a different recipe, but she confirmed that nothing had been changed. That night, Fahlberg realized that the bread tasted notably sweeter than usual and wondered what had caused the difference. The only plausible explanation was that he had not cleaned his hands properly in the lab, so some chemical residue remained on them.

Convinced that he had carried certain chemicals home from his experiments, Fahlberg set out the next day in the lab to figure out which ones had been left on his skin. He gathered all the lab equipment he had used before (which he had not washed either), and actually tasted the items one by one. After this episode of unsanitary testing, he recognized the chemical that had made his bread taste sweeter the previous night: benzoic sulfimide. Fahlberg and Remsen published articles about the chemical in 1879 and 1880.

Several years later, Fahlberg was working in New York City and applied for a patent for the method of producing the artificial sweetener, or “saccharin” as he named it. In 1886, he began producing saccharin in a factory based in Magdeburg, Germany. The patent and popularity of saccharin would make Fahlberg a wealthy man.

Sugar Shortages

Although the scientific papers on benzoic sulfimide were published under both Fahlberg and Remsen, the patent did not include Remsen as one of the holders. As Fahlberg profited commercially from the discovery, Remsen grew irritated and famously remarked that Fahlberg was a scoundrel and that it nauseated him to hear the names Fahlberg and Remsen in the same sentence. In September 1901, Remsen was appointed President of Johns Hopkins University. He held the position until January 1913.

Saccharin was commercialized soon after its discovery, but it did not come into widespread use until the sugar shortages of World War I. Starting in the 1960s, it became popular among dieters, since saccharin is a calorie-free sweetener. One of the most popular brands of the sweetener in the United States is Sweet’N Low, commonly found in pink packets that are available at many restaurants.

Fahlberg was not the most hygienic scientist in the world and certainly not the most honest one either, because he didn’t honor Remsen’s contribution to the discovery of saccharin. Without his utter neglect of personal hygiene and poor cleanliness in the laboratory, however, we might never have known that saccharin is, in fact, edible.

VIII. Polytetrafluoroethylene a.k.a Teflon

Thanks to Dr. Roy J. Plunkett and the tall order requested of him by DuPont, Teflon was invented. After graduating from Manchester University with a B.A. in chemistry in 1932 and receiving his Ph.D. in chemistry from Ohio State University four years later, Plunkett landed a job as a research chemist at DuPont, at the company’s Jackson Laboratory in Deepwater, New Jersey. At some point during his tenure, he was assigned to find new forms of safe refrigerant to replace the old-fashioned, potentially toxic, environmentally dangerous ammonia and sulfur dioxide. Part of the job required him to synthesize new compounds to get the desired outcome.

Plunkett and his assistant Jack Rebok worked together on a potential alternative refrigerant called tetrafluoroethylene (TFE) in 1938. They synthesized around 100 pounds of the new chemical and stored the gas in small steel cylinders. On April 6th, 1938, the two men were preparing to work on the gas they had previously stored in the cylinder. As the cylinder valve was opened, the TFE was supposed to flow under its own pressure, but it did not seem to work. Curious about what had happened, they agreed to unscrew the cylinder valve to get a closer look inside. Plunkett tipped the cylinder upside down, only to get a small amount of whitish powder falling out of the cylinder. Since the cylinder felt quite heavy, as if something had been stuck inside, they took a wire and scraped the cylinder. Still nothing came out but more of the same powder as before. To inspect it even closer, they finally cut the cylinder open and saw more of the powder packed onto the lower sides and the bottom of the steel cylinder. Apparently, the TFE gas had polymerized into a white waxy solid form of polytetrafluoroethylene (PTFE).

Like any good scientist, Plunkett decided to run some tests on the newly discovered chemical to see if it had any useful characteristics. As it turned out, not only had they accidentally synthesized a new compound, but the new material also had quite impressive properties including high-temperature resistance, corrosion resistance, chemical stability, and low surface friction. As a matter of fact, the substance is one of the slipperiest in the world.

The new substance was deemed interesting enough that it deserved further study, and it was subsequently transferred to the company’s Central Research Department. Plunkett no longer had involvement, as DuPont assigned other scientists with more experience in polymer research to continue studying the substance. Plunkett himself was transferred to a separate department (Chambers Works), where he served as the chief chemist in the development of Tetraethyllead (tetraethyl lead) or TEL, a chemical additive to increase gasoline octane levels.

Kinetic Chemicals, founded by DuPont in partnership with General Motors, patented the new substance in 1941 and registered the Teflon trademark four years later. By 1948, the partnered companies were producing more than two million pounds of PTFE per year. During early development, however, Teflon was primarily used for industrial and military applications. One of the most notable was its use as the coating material for seals and valves in the pipes containing highly reactive materials in the Manhattan Project.

Less than two decades after the end of World War II, Teflon became more common and widely used in commercial applications as well as in fabrics and wire insulation. By the 1960s, as the technology improved and the cost to produce it declined, PTFE started being used as a coating in pans. That remains the most common use of the material today. Teflon is not the only brand of PTFE in today’s market. There are other brands of the same material used in various household items, automotive products, and industrial processes, for example carpets, windshield wipers, the coating on glasses, semiconductor manufacturing, hair treatment appliances, light bulbs, lubricants, and even stain repellent in furniture.

Other Facts about Teflon:

- While attending Manchester University, Plunkett roomed with Paul Flory, who went on to win the Nobel Prize in 1974 for his work on polymers. Flory also worked at DuPont after graduating with a Ph.D. from Ohio State University.

- Teflon was once listed in the Guinness Book of World Records as the slipperiest substance in existence. That is no longer accurate, although Teflon is still cited as the only surface that geckos, whose feet cling by Van der Waals forces, cannot stick to.

- A molecule of Teflon consists of fluorine and carbon. Each carbon atom is attached to two fluorine atoms. Apparently, when fluorine is part of the molecular makeup of a substance, the material repels other matter.

- Since the bond between carbon and fluorine in Teflon is so strong, the material is highly non-reactive when exposed to other chemicals. The Manhattan Project used Teflon as a coating in pipes and valves mainly for this reason.

- Teflon is one of the largest molecules. The molecular weight of PTFE can be more than 30 million atomic mass units.

- Most (if not all) newer models of non-stick pans are dishwasher-safe. You can scrape the surface with metal utensils without damaging the coating. Older generations were not as well made as the newer ones.

- In the United States, the first Teflon-coated cookware was manufactured by Laboratory Plasticware Fabricators founded by Marion A. Trozzolo. His original design known as “The Happy Pan” is now preserved in the Smithsonian Museum. He also donated Teflon coating for the construction of the fence around Harry S. Truman’s home in Missouri.

For his invention of Teflon, the city of Philadelphia awarded Plunkett the John Scott Medal in 1951. It was one of the earliest professional honors he received for the invention. In 1973, Plunkett was inducted into the Plastics Hall of Fame. He continued his tenure at DuPont until he retired in 1975. A decade after retirement, he made his way into the National Inventors Hall of Fame, putting his name alongside many other famous inventors in history. Plunkett died of cancer on May 12th, 1994 at the age of 83 in his home in Texas.

IX. Velcro

In 1941, a Swiss engineer named Georges de Mestral went for a walk in the woods with his dog and stumbled upon something that would become the basic design of his historic brainchild, Velcro. After his walk, de Mestral noticed that some burrs were clinging to his trousers and to his dog as well. It occurred to him that he could probably turn this common nuisance into a more useful design.

So, the engineer put a burr under a microscope and took a closer look at it. He discovered that burrs are covered in many tiny hooks, which allow them to grab onto fur and fibers upon contact. After many years of research and development (because it was of course far from easy to make a synthetic burr), de Mestral managed to reproduce the natural properties of burrs using two strips of fabric: one strip fitted with thousands of tiny hooks and another with looped fabric. When the two strips were pressed against each other (on the sides with hooks and loops), they stuck as if they were glued together. Unlike glue, however, the strips could be used over and over again without damaging them. He made the strips out of cotton at first, but later found that nylon worked better and was more durable. de Mestral formally patented his invention in 1955, 14 years later, and called it “Velcro,” from the French words “velours” (velvet) and “crochet” (hook).

During the early years after the invention was released to the world, news reports often referred to Velcro as a “zipperless zipper.” de Mestral also founded a company called Velcro to manufacture his reusable fastener. It was (and still is) a trademarked brand. He later sold the company and his patent rights to Velcro SA. Even to this day, the official website of the company states that you should not use the term “Velcro” as a noun or verb as it diminishes the importance of the brand. The website also proudly reminds users that the company has lawyers at the ready to set the record straight.

Growing Popularity

In the 1960s, Apollo astronauts utilized Velcro to secure various devices in space. Because of this, many people were led to think Velcro was one of NASA’s inventions too. This is not correct. Although NASA played a major role in setting the path for worldwide adoption of the fastener, the agency did not have any involvement in the invention of the product or its development.

Velcro has always been a reliable fastener indeed, as proven by those astronauts, who used it to secure food packets and any equipment they did not want floating away. Airplanes used Velcro to secure flotation devices and hospitals affixed the product to a large variety of medical instruments, including blood pressure gauges (the band strapped to your arm). As popularity grew, people began using Velcro for all sorts of household applications from bundling wires to securing pictures to the wall.

A fashion show held at the Waldorf Astoria Hotel in New York City in 1959 displayed various Velcro-fitted items including diapers and jackets. A report published by the New York Times showed great appreciation for the fastener and praised it by declaring that Velcro would eventually end the use of safety pins, zippers, snaps, and even buttons. The original design only came in black, but the fashion show displayed Velcro in many different colors. In spite of that, consumers thought it was too ugly, so its functions were relegated to the world of athletic equipment for quite a long period.

A blatant lack of aesthetic appeal prevented many people from using Velcro in fashion accessories. In other words, fashion companies simply refused to make Velcro-equipped products. In the late 1960s, things started to get better for Velcro. Puma became the first big name to offer shoes with Velcro fasteners instead of the more common laces. The fact that the product came from a major brand greatly increased Velcro’s popularity. Other shoe companies including Reebok and Adidas followed suit soon enough. By the 1980s, the vast majority of children in the United States had Velcro-fastened shoes.

Unfortunately for Velcro, the patent had expired before worldwide adoption came along. Many other companies all across Europe, Asia, and Mexico were already making knock-off versions by then. For this reason, Velcro has been persistent to this day about the use of its trademarked brand; anybody can make hook-and-loop fasteners, but only Velcro makes Velcro.

Another major burst of publicity for the product came in 1984, this time courtesy of David Letterman. While wearing a Velcro suit, he interviewed the director of sales of Velcro USA. At the end of the interview, Letterman launched himself with a trampoline onto a wall and stuck to it! Several years later, a New Zealand bar owner organized a similar stunt, but the Velcro and the jumping equipment were meant for his customers to participate in a “human fly” contest. Velcro jumping activities became more common by 1992 in the United States, and while they never gained serious popularity, you can probably still see the same attraction in some fairs or carnivals all around the world.

One specific application where Velcro should have worked well but turned out to be ineffective was in military battle uniforms. The ACU (Army Combat Uniform, or the Airman Combat Uniform) introduced Velcro-fitted models in 2004. Soldiers did not like it very much, as their name tags often fell off and the loud ripping noise of the strips made it more difficult to hide from enemies in combat. The strips with loops were also dust magnets and often clogged with dirt. In many warzones, dust is a constant.

It has been more than 60 years since de Mestral patented Velcro. You would assume that the fastener has become outdated as newer, more reliable models are now available, but this is not the case. People continue to create new ways to use the fasteners with everyday items. Teenagers now secure their electronic tablets to a desk with Velcro, mothers use Velcro to attach photographs to the wall, and cyclists wear digital watches fastened to their wrists with Velcro straps, among others. For his invention, de Mestral was inducted into the National Inventors Hall of Fame in 1999.

X. Safety Glass

Even if you’re not working at a laboratory as a chemist, chances are you understand one of the most important rules in the lab: make sure research equipment and tools are properly cleaned after every use. Chemical residue in glassware containers or eye droppers can render the next experiment (with the same equipment) useless or inaccurate because the tools are contaminated with unwanted substances. In a worst-case scenario, a mixture of certain chemicals can trigger an explosion in the lab, causing injuries or even death. Despite the warnings, however, mistakes and accidents do happen. Every once in a great while, fortunately, poor cleanliness does not mean a disaster. Sometimes it leads to a great discovery.

Take the invention of the safety glass as an example. In 1903, Edouard Benedictus, a French scientist, was trying to find specific chemicals from a high shelf in the laboratory. He took a ladder, climbed to the top shelf, and reached for the container he needed. He accidentally knocked over glassware from the shelf below, and it fell to the floor. Normally, the glassware would shatter to pieces, but this particular container didn’t.

Cellulose Nitrate

As a matter of fact, the container still retained its general shape, although the glass itself was cracked all over, as if every piece was held together with a layer of adhesive. After a closer inspection of the container, Benedictus realized that one of his assistants had not properly removed a chemical residue from inside the container. It was an act of laziness or just poor diligence, but either way the assistant committed a blatant violation of one of the basic rules of working in a lab.

The glassware had been used before to contain cellulose nitrate, but improper cleaning left a little bit of residue inside. Apparently, the solvent had evaporated over time, leaving the residue as a coating on the inside surface of the glassware. The coating formed a thin layer of film, which held the broken pieces together. Based on his findings, Benedictus later developed an improved shatterproof glass design. Using the same principle, he made safety glass by placing a thin layer of celluloid between two pieces of glass. Through trial and error, he managed to get everything right eventually. Benedictus filed a patent for his laminated glass design in 1909, six years after the initial discovery.

Two years after he had filed the patent, he started manufacturing the shatterproof glass composite for commercial application, particularly for cars, to reduce injuries in accidents. Automobile manufacturers were slow to adopt the material, mainly because the composite was still expensive. One of the first successful adoptions was by the military, for which laminated glass became a primary component of eyepieces in gas masks in World War I. As the product gained popularity, and the automobile industry was simultaneously beginning to rise, more cars were fitted with the shatterproof glass designed by Benedictus, thanks to his assistant’s laziness.

XI. Vulcanized Rubber a.k.a Rubber

Before Columbus “rediscovered” rubber and introduced it to the western culture in general, this naturally elastic substance had been used for many centuries, albeit not commercially. Natural rubber is harvested from the sap coming out of the bark of its tree. The term “rubber” originated from the use of this substance in pencil erasers. It could rub out marks made by pencil, hence the name.

In the early years after its discovery, rubber was used for many products including balls, containers, and as an additive for textiles. One of the most troubling downsides was that rubber could not withstand extreme temperatures: it became brittle in cold weather and sticky in too much heat. In its raw form, natural rubber is just a thick sap drained from Hevea brasiliensis (rubber tree), grown in tropical areas such as Brazil. Currently, Malaysia and Indonesia are two of the biggest suppliers of natural rubber.

Despite its obvious drawback, rubber was a major commodity in the early years of the Industrial Revolution. When mixed with acid, the thick liquid became malleable enough to transform into all sorts of shapes and forms. The world had not seen a method to make rubber more reliable in industrial settings until Charles Goodyear made an accidental discovery of the right formula to process the thick sap in 1839. Beginning his career as a partner in his father’s hardware business, which eventually went bankrupt in 1830, Goodyear turned his interest to rubber processing. His purpose was quite simple: to find a way to produce rubber without the previously known negative properties.

Goodyear’s first major breakthrough happened in 1837, when he figured out that nitric acid could make natural rubber dry and smooth. While not perfect, it was better than anything that had come before in the rubber processing industry. He was granted several thousand dollars by a business partner to begin production, but the financial panic of that year turned the venture into a failure. Goodyear and his family actually lived in an abandoned rubber factory in Staten Island for a time, surviving mainly on fish. He received further backing in Boston, and this time he was contracted to produce rubber mailbags for the government. After a momentary prosperity thanks to this project, the nitric acid formula turned out to be another failure. The mailbags melted when exposed to high temperatures.

After long years of almost total futility, Goodyear moved to Woburn, Massachusetts. Farmers around where he lived were generous enough to give his children milk and food. His greatest discovery happened in February 1839. He was in Woburn’s general store to demonstrate his latest (albeit failed) formula in rubber processing. It must have been quite an unusual day for Goodyear because the typically mild-mannered man was apparently pretty excited in the store. He waved a lump of sticky rubber in the air and for some reason the material landed on a hot stove. He scraped it off, and what happened next caught him off guard. Instead of melting like syrup, the rubber charred. Around the charred area, the rubber remained springy and dry.

Goodyear still had to figure out the reason behind the new texture, so he could develop the right formula to replicate the accidental process he had come across in the store. At last he did: the rubber must be mixed with 8% sulfur, along with lead to act as an accelerant, and heated with steam under pressure at 270 degrees Fahrenheit for up to 8 hours. The process gave him the most consistent results. He filed the patent for it on January 30th, 1844.

Thomas Hancock, a self-taught English engineer and pioneer in the rubber industry, filed for an English patent for the same rubber processing on November 21st, 1843, about eight weeks ahead of Goodyear. According to Hancock, the idea came from a sample of material shown to him by William Brockedon, a friend of his, who had acquired it from America. The sample material was rubber treated with sulfur. The term vulcanization was also Brockedon’s idea, inspired by Vulcan, the Roman god of fire.

Legal battles for patent rights were persistent troubles clouding Goodyear for the rest of his life. He made bad deals with other parties, earning him only a fraction of what he should have received for his discovery. When Goodyear died in 1860 at the age of 59, he left debts of around $200,000. In 1898, a rubber company based in Akron, Ohio called itself Goodyear Tire and Rubber Co., in honor of Goodyear, the man who invented the rubber vulcanization process. Some people made and continue to make millions out of his idea.

Goodyear was inducted into the National Inventors Hall of Fame in 1976. His legacy lives on, partly thanks to Frank Seiberling, the founder of Goodyear Tire and Rubber Co., although the company was never connected to Goodyear or his descendants.

Product design on demand with Cad Crowd

Do you have a new invention idea or consumer product that you plan to develop? Our vetted community of 3D CAD designers, freelance engineers, and industrial designers is available on demand. Contact us or send your project details on the quote form for a free design quote.
